Your brain controls everything you do, and at that job it outperforms any computer you can find. This intricate organ processes information continuously, even while you sleep, and uses neurons to transmit messages. Scientists are working to understand the brain better so that they can build a digital replica of it. But can computers perform the same tasks as our brains?

To enable computers to perform these tasks, we need an artificial neural network: a set of digital neurons connected into a network that resembles the structure of the brain. Building artificial neural networks requires the most universal language of all, mathematics.

In artificial intelligence (AI), machine learning, and deep learning, neural networks mimic the behaviour of the human brain, allowing computer programs to recognise patterns and solve common problems.

What Is an ANN?

Artificial neural networks (ANNs) are biologically inspired computer programs that mimic the way the human brain processes information. Rather than being explicitly programmed, ANNs learn (or are trained) from experience, by identifying patterns and relationships in data.

An ANN is made up of hundreds (or many more) individual units, known as artificial neurons or processing elements (PEs), which are organised into layers and connected by coefficients called weights. As in the brain, it is the network of connections between neurons that gives the computation its power. Each PE has weighted inputs, a transfer function, and a single output. A neural network's behaviour depends on the transfer functions of its neurons, its learning rule, and its overall design.

Because the weights are variables that can be adjusted, a neural network is a parameterised system. A neuron's activation is the weighted sum of its inputs. This activation signal is then passed through a transfer function to produce the neuron's single output.
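As a minimal sketch of the single-neuron computation described above, the Python below forms the weighted sum of the inputs and passes it through a sigmoid transfer function. The choice of sigmoid, the optional `bias` term, and the example weights are assumptions for illustration; the text does not prescribe a particular transfer function.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic transfer function: squashes any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron_output(inputs: list[float], weights: list[float], bias: float = 0.0) -> float:
    """Compute one artificial neuron's output.

    The activation is the weighted sum of the inputs (plus an assumed bias);
    the transfer function then turns that activation into the single output.
    """
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    activation = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(activation)


# Two inputs with hypothetical weights 0.5 and -1.0.
print(neuron_output([2.0, 1.0], [0.5, -1.0]))  # activation is 0.0, so the output is 0.5
```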

The transfer function is what makes the network non-linear. During training, the weights on the connections between units are adjusted until the prediction error is minimised and the network reaches the required level of accuracy.
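To see why the transfer function matters, the sketch below chains two one-unit layers with hypothetical weights. Without a transfer function, the chain collapses into a single linear layer; inserting a sigmoid between the layers breaks that equivalence, so the output's slope is no longer constant.

```python
import math


def linear_layer(x: float, w: float, b: float) -> float:
    """A one-unit layer with no transfer function: just w * x + b."""
    return w * x + b


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical weights and biases for a tiny two-layer chain.
W1, B1 = 2.0, 1.0
W2, B2 = -3.0, 0.5


def two_linear_layers(x: float) -> float:
    # Stacking linear layers without a transfer function...
    return linear_layer(linear_layer(x, W1, B1), W2, B2)


def collapsed(x: float) -> float:
    # ...equals ONE linear layer with weight W2*W1 and bias W2*B1 + B2.
    return (W2 * W1) * x + (W2 * B1 + B2)


def with_transfer(x: float) -> float:
    # A sigmoid between the layers makes the chain non-linear.
    return linear_layer(sigmoid(linear_layer(x, W1, B1)), W2, B2)


for x in (-2.0, 0.0, 3.0):
    assert math.isclose(two_linear_layers(x), collapsed(x))

# A linear function changes by the same amount over equal steps; this one does not.
slope_a = with_transfer(1.0) - with_transfer(0.0)
slope_b = with_transfer(3.0) - with_transfer(2.0)
print(slope_a, slope_b)
```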

Once the network has been trained and tested, it can be given new input data and used to predict the output. Different types of neural networks can be described by their neurons' transfer functions, their learning rules, and their connection patterns, and new types are being developed all the time.
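The training and prediction steps can be illustrated with a single sigmoid neuron learning the logical OR function. This is a minimal sketch rather than a production training loop: it assumes cross-entropy as the measure of prediction error, plain full-batch gradient descent as the learning rule, and a hand-picked learning rate and epoch count. The helper names `train`, `predict`, and `mean_loss` are hypothetical.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


# Training data for logical OR: ((x1, x2), target).
DATA = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0), ((1.0, 0.0), 1.0), ((1.0, 1.0), 1.0)]


def predict(w: list[float], b: float, x: tuple[float, float]) -> float:
    return sigmoid(w[0] * x[0] + w[1] * x[1] + b)


def mean_loss(w: list[float], b: float) -> float:
    """Average cross-entropy between predictions and targets (the prediction error)."""
    eps = 1e-12  # keeps log() away from zero
    total = 0.0
    for x, y in DATA:
        p = predict(w, b, x)
        total -= y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps)
    return total / len(DATA)


def train(epochs: int = 2000, lr: float = 0.5) -> tuple[list[float], float]:
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0, 0.0], 0.0
        for x, y in DATA:
            # For sigmoid + cross-entropy, d(loss)/d(activation) is prediction - target.
            err = predict(w, b, x) - y
            grad_w[0] += err * x[0]
            grad_w[1] += err * x[1]
            grad_b += err
        # Move each weight a small step against its average gradient.
        w[0] -= lr * grad_w[0] / len(DATA)
        w[1] -= lr * grad_w[1] / len(DATA)
        b -= lr * grad_b / len(DATA)
    return w, b


w, b = train()
print("loss before training:", mean_loss([0.0, 0.0], 0.0))
print("loss after training:", mean_loss(w, b))
# After training, an unseen input can be fed in to get a prediction.
print("prediction for (0.9, 0.1):", predict(w, b, (0.9, 0.1)))
```

Because (0.9, 0.1) lies between two training points labelled 1, the trained neuron should output a value above 0.5 for it.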

Basic Structure of ANNs

ANNs are based on the premise that, with the right connections, silicon and wires can simulate the living neurons and dendrites of the human brain.

The human brain is made up of about 86 billion nerve cells, called neurons. Each neuron is connected to thousands of other cells through axons. Dendrites accept inputs from sensory organs as well as stimuli from the external environment. These inputs create electrical impulses that travel quickly through the neural network. A neuron can then either pass the message on to another neuron for processing or not pass it on at all.

ANNs are made up of many nodes that imitate the biological neurons of the human brain. The neurons are joined by links, which allow them to communicate with one another.

Each node takes input data and performs simple operations on it. The results of these operations are passed on to other neurons. The output of each node is called its activation or node value.

Each link is associated with a weight. ANNs are capable of learning, which happens by adjusting these weight values.
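The node-and-link picture translates directly into code. In this sketch, each layer is stored as a list of weight rows (one row of link weights per node) plus a list of biases; the 2-3-1 layout, the weight and bias values, and the sigmoid transfer function are all assumptions. Each layer's node values become the inputs to the next layer, and changing a single link weight changes the network's output, which is the mechanism that learning relies on.

```python
import copy
import math


def sigmoid(z: float) -> float:
    """Logistic transfer function."""
    return 1.0 / (1.0 + math.exp(-z))


def layer_forward(inputs, weights, biases):
    """Compute the node values of one layer.

    Each row of `weights` holds the weights on the links feeding one node.
    """
    return [
        sigmoid(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def network_forward(inputs, layers):
    """Pass node values layer by layer; each layer's output feeds the next."""
    values = inputs
    for weights, biases in layers:
        values = layer_forward(values, weights, biases)
    return values


# Hypothetical 2-3-1 network: 2 inputs, 3 hidden nodes, 1 output node.
layers = [
    ([[0.1, 0.4], [-0.3, 0.2], [0.5, -0.6]], [0.0, 0.1, -0.1]),  # hidden layer
    ([[0.7, -0.2, 0.3]], [0.05]),                                  # output layer
]
out = network_forward([1.0, 0.5], layers)
print("output:", out)

# Learning works by changing weights: raise one hidden-to-output link weight.
tweaked = copy.deepcopy(layers)
tweaked[1][0][0][0] += 0.5
print("output after tweak:", network_forward([1.0, 0.5], tweaked))
```

Because every sigmoid node value is positive, increasing that link weight raises the output.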

Neural Networks vs. Deep Learning

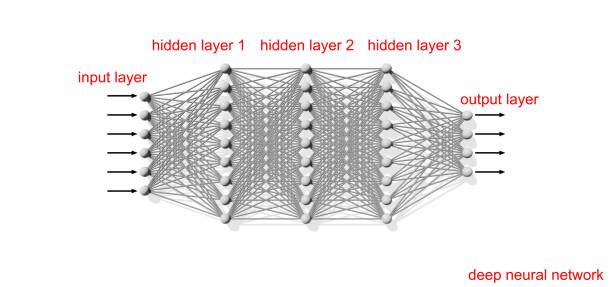

The terms “deep learning” and “neural networks” are often used interchangeably in conversation, which can be confusing. The “deep” in deep learning simply refers to the number of layers in a neural network. A neural network with more than three layers, counting the input and output layers, can be considered a deep learning algorithm. A neural network with only two or three layers is just a basic neural network.
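The layer-count rule of thumb above can be written as a small helper. `describe_network` is a hypothetical name, and the parameter count assumes fully connected layers with one bias per non-input node, which the text does not specify. It simply shows how quickly the number of adjustable weights grows as layers are added.

```python
def describe_network(layer_sizes: list[int]) -> tuple[bool, int, int]:
    """Return (is_deep, hidden_layer_count, parameter_count).

    `layer_sizes` lists the node count of every layer, input and output included.
    """
    if len(layer_sizes) < 2:
        raise ValueError("a network needs at least an input and an output layer")
    # Rule of thumb from the text: more than three layers in total counts as deep.
    is_deep = len(layer_sizes) > 3
    hidden = len(layer_sizes) - 2
    # Assumption: every node links to every node in the next layer,
    # and each non-input node also has one bias.
    params = sum(a * b + b for a, b in zip(layer_sizes, layer_sizes[1:]))
    return is_deep, hidden, params


print(describe_network([2, 3, 1]))           # (False, 1, 13)
print(describe_network([784, 128, 64, 10]))  # (True, 2, 109386)
```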

Types of Neural Networks

Neural networks can be grouped into several types, each suited to different applications. The following list is not exhaustive, but it covers the most common types you will encounter and their typical use cases:

This post has mostly focused on feedforward neural networks, also called multi-layer perceptrons (MLPs). They consist of an input layer, one or more hidden layers, and an output layer. Despite the name, MLPs are actually built from sigmoid neurons rather than perceptrons, because most real-world problems are nonlinear. These models are usually trained by feeding data into them, and they form the foundation for computer vision, natural language processing, and other neural networks.
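Why hidden layers matter for nonlinear problems can be seen with XOR, a classic function that no single-layer perceptron can represent because its two classes cannot be separated by a straight line. In the sketch below, the weights are hand-picked for illustration rather than learned: one hidden sigmoid unit behaves roughly like OR, the other like NAND, and the output unit combines them like AND, which yields XOR.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def neuron(inputs: list[float], weights: list[float], bias: float) -> float:
    """Weighted sum followed by a sigmoid transfer function."""
    return sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)


def xor_mlp(x1: float, x2: float) -> float:
    # Hidden layer (hand-picked weights): one unit acts like OR, the other like NAND.
    h_or = neuron([x1, x2], [20.0, 20.0], -10.0)
    h_nand = neuron([x1, x2], [-20.0, -20.0], 30.0)
    # Output unit acts like AND of the two hidden units, giving XOR.
    return neuron([h_or, h_nand], [20.0, 20.0], -30.0)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, round(xor_mlp(a, b)))
```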

Convolutional neural networks (CNNs) are similar to feedforward networks, but they are usually used for image recognition, pattern recognition, and/or computer vision. These networks apply principles from linear algebra, particularly matrix multiplication, to identify patterns within an image.
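The pattern-finding step of a CNN can be sketched as a sliding dot product between a small kernel and each patch of the image. This plain-Python illustration assumes "valid" padding, stride 1, a single channel, and a hypothetical vertical-edge kernel. Strictly speaking, it computes cross-correlation, which is what most deep-learning libraries implement under the name convolution; libraries commonly speed it up by rearranging the patches so that the whole operation becomes one matrix multiplication.

```python
def conv2d(image: list[list[int]], kernel: list[list[int]]) -> list[list[int]]:
    """'Valid', stride-1, single-channel 2-D cross-correlation."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Dot product between the kernel and the image patch beneath it.
            row.append(sum(kernel[a][b] * image[i + a][j + b]
                           for a in range(kh) for b in range(kw)))
        out.append(row)
    return out


# A 4x4 image that is dark on the left and bright on the right.
IMAGE = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hypothetical kernel: responds where the right column is brighter than the left.
KERNEL = [
    [-1, 1],
    [-1, 1],
]

for row in conv2d(IMAGE, KERNEL):
    print(row)
```

The strong responses in the middle column of the output mark where the image changes from dark to bright.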

Recurrent neural networks (RNNs) are distinguished by their feedback loops. These learning algorithms are mainly used with time-series data to predict future outcomes, as in stock market predictions or sales forecasting.
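The feedback loop that defines an RNN can be sketched with a single recurrent unit whose previous hidden state is fed back in at every time step. The weights `w_x` and `w_h`, the bias, and the `tanh` transfer function are untrained, hypothetical choices. The point is that the final state depends on the order of the inputs, which is what lets RNNs model sequences such as time series.

```python
import math


def rnn_forward(sequence: list[float], w_x: float = 0.5, w_h: float = 0.8,
                b: float = 0.0) -> list[float]:
    """Run a one-unit recurrent layer over a sequence; return every hidden state."""
    h = 0.0  # the hidden state starts empty
    states = []
    for x in sequence:
        # Feedback loop: the previous hidden state h is an input to this step.
        h = math.tanh(w_x * x + w_h * h + b)
        states.append(h)
    return states


early = rnn_forward([1.0, 0.0, 0.0])
late = rnn_forward([0.0, 0.0, 1.0])
print(early[-1], late[-1])  # same inputs, different order, different final state

no_feedback = rnn_forward([1.0, 0.0, 0.0], w_h=0.0)
print(no_feedback[-1])  # without the loop, the earlier 1.0 has been forgotten
```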

Applications of ANNs

Artificial neural networks are designed to simulate the human brain digitally. They can be used for a variety of tasks, such as classifying data, predicting results, and clustering data. As the networks process and learn from data, they can sort a data set into predefined classes. They can also be trained to predict the expected output for a given input, or to detect a particular feature of the data and then group the data by that feature.
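Classification is often implemented by giving the output layer one node per class and picking the class with the highest score. The sketch below assumes a softmax function to turn the raw output scores into probabilities; the class labels and scores are hypothetical.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Turn raw output-layer scores into probabilities that sum to 1."""
    m = max(scores)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def classify(scores: list[float], labels: list[str]) -> tuple[str, float]:
    """Pick the predefined class whose output node has the highest probability."""
    probs = softmax(scores)
    best = max(range(len(probs)), key=lambda i: probs[i])
    return labels[best], probs[best]


# Hypothetical output-layer scores for one input and three predefined classes.
label, confidence = classify([2.0, 0.5, -1.0], ["cat", "dog", "bird"])
print(label, round(confidence, 3))
```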

Because they are already used for complex analysis in disciplines ranging from engineering to medicine, these networks could help create the next generation of computers.

Google’s “watch next” recommendations for YouTube videos and Google Photos are both reportedly powered by a 30-layer neural network. Facebook’s DeepFace technology, which identifies specific faces with a 97% success rate, uses artificial neural networks. Skype’s real-time translation is also powered by an ANN.

The gaming industry already relies heavily on artificial neural networks. ANNs also help recognise handwriting, which is useful in fields such as banking. In medicine, artificial neural networks can do many important things. They could be used to build models of the human body that help physicians diagnose their patients’ ailments correctly. Artificial neural networks also make it possible to interpret complex medical images, such as CT scans, more quickly and accurately.

Conclusion

Machines based on neural networks will be able to work out many abstract problems on their own and will improve by learning from their mistakes. Perhaps one day a device known as a brain-computer interface will let us connect people to machines! It would translate people's mental cues into signals that robots could respond to. Perhaps, in the future, all of our interactions with the environment will be mental.

Before you go…

Hey, thank you for reading this blog to the end. I hope it was helpful. Let me tell you a little bit about Nicholas Idoko Technologies. We help businesses and companies build an online presence by developing web, mobile, desktop and blockchain applications.

As a company, we work within your budget to develop your ideas and projects beautifully and elegantly, and we take part in the growth of your business. We do a lot of freelance work in sectors such as blockchain, booking, e-commerce, education, online games, voting and payments. Our ability to provide the resources clients need to deliver their software to their target audience on schedule is unmatched.

Be sure to contact us if you need our services! We are readily available.